Summary

Artificial intelligence (AI) will transform the world later this century. I expect this transition will be a "soft takeoff" in which many sectors of society update together in response to incremental AI developments, though the possibility of a harder takeoff in which a single AI project "goes foom" shouldn't be ruled out. If a rogue AI gained control of Earth, it would proceed to accomplish its goals by colonizing the galaxy and undertaking some very interesting achievements in science and engineering. On the other hand, it would not necessarily respect human values, including the value of preventing the suffering of less powerful creatures. Whether a rogue-AI scenario would entail more expected suffering than other scenarios is a question to explore further. Regardless, the field of AI ethics and policy seems to be a very important space where altruists can make a positive-sum impact along many dimensions. Expanding dialogue and challenging us-vs.-them prejudices could be valuable.

Other versions

*Several of the new written sections of this piece are absent from the podcast because I recorded it a while back.

Introduction

This piece contains some observations on what may be a coming machine revolution in Earth's history. For general background reading, a good place to start is Wikipedia's article on the technological singularity.

I am not an expert on all the arguments in this field, and my views remain very open to change with new information. In the face of epistemic disagreements with other very smart observers, it makes sense to grant some credence to a variety of viewpoints. Each person brings unique contributions to the discussion by virtue of his or her particular background, experience, and intuitions.

To date, I have not found a detailed analysis of how those who are moved more by preventing suffering than by other values should approach singularity issues. This seems to me a serious gap, and research on this topic deserves high priority. In general, it's important to expand discussion of singularity issues to encompass a broader range of participants than the engineers, technophiles, and science-fiction nerds who have historically pioneered the field.

I. J. Good observed in 1982: "The urgent drives out the important, so there is not very much written about ethical machines". Fortunately, this may be changing.

Is "the singularity" crazy?

In fall 2005, a friend pointed me to Ray Kurzweil's The Age of Spiritual Machines. This was my first introduction to "singularity" ideas, and I found the book pretty astonishing. At the same time, much of it seemed rather implausible to me. In line with the attitudes of my peers, I assumed that Kurzweil was crazy and that while his ideas deserved further inspection, they should not be taken at face value.

In 2006 I discovered Nick Bostrom and Eliezer Yudkowsky, and I began to follow the organization then called the Singularity Institute for Artificial Intelligence (SIAI), which is now MIRI. I took SIAI's ideas more seriously than Kurzweil's, but I remained embarrassed to mention the organization because the first word in SIAI's name sets off "insanity alarms" in listeners.

I began to study machine learning in order to get a better grasp of the AI field, and in fall 2007, I switched my college major to computer science. As I read textbooks and papers about machine learning, I felt as though "narrow AI" was very different from the strong-AI fantasies that people painted. "AI programs are just a bunch of hacks," I thought. "This isn't intelligence; it's just people using computers to manipulate data and perform optimization, and they dress it up as 'AI' to make it sound sexy." Machine learning in particular seemed to be just a computer scientist's version of statistics. Neural networks were just an elaborated form of logistic regression. There were stylistic differences, such as computer science's focus on cross-validation and bootstrapping instead of testing parametric models -- made possible because computers can run data-intensive operations that were inaccessible to statisticians in the 1800s. But overall, this work didn't seem like the kind of "real" intelligence that people talked about for general AI.

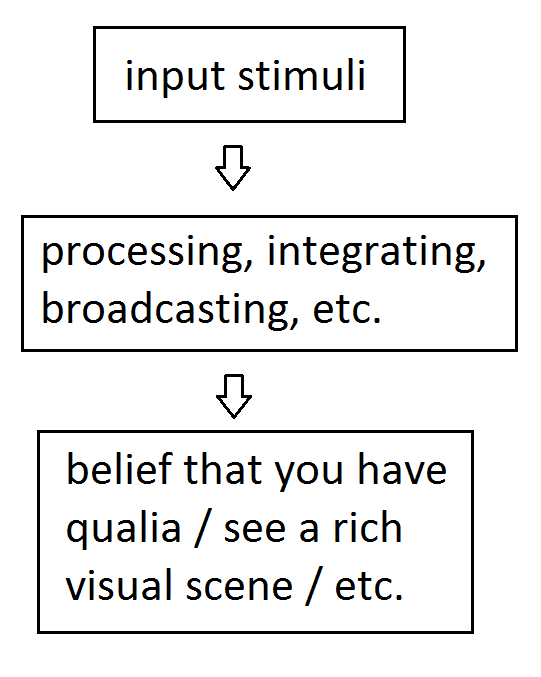

This attitude began to change as I learned more cognitive science. Before 2008, my ideas about human cognition were vague. Like most science-literate people, I believed the brain was a product of physical processes, including firing patterns of neurons. But I lacked further insight into what the black box of brains might contain. This led me to be confused about what "free will" meant until mid-2008 and about what "consciousness" meant until late 2009. Cognitive science showed me that the brain was in fact very much like a computer, at least in the sense of being a deterministic information-processing device with distinct algorithms and modules. When viewed up close, these algorithms could look as "dumb" as the kinds of algorithms in narrow AI that I had previously dismissed as "not really intelligence." Of course, animal brains combine these seemingly dumb subcomponents in dazzlingly complex and robust ways, but I could now see that the difference between narrow AI and brains was a matter of degree rather than kind. It now seemed plausible that broad AI could emerge from lots of work on narrow AI combined with stitching the parts together in the right ways.

So the singularity idea of artificial general intelligence seemed less crazy than it had initially. This was one of the rare cases where a bold claim turned out to look more probable on further examination; usually extraordinary claims lack much evidence and crumble on closer inspection. I now think it's quite likely (maybe ~75%) that humans will produce at least a human-level AI within the next ~300 years conditional on no major disasters (such as sustained world economic collapse, global nuclear war, large-scale nanotech war, etc.), and also ignoring anthropic considerations.

The singularity is more than AI

The "singularity" concept is broader than the prediction of strong AI and can refer to several distinct sub-meanings. As with most ideas, there's a lot of fantasy and exaggeration associated with "the singularity," but at least the core idea that technology will progress at an accelerating rate for some time to come, absent major setbacks, is not particularly controversial. Exponential growth is the standard model in economics, and while this can't continue forever, it has been a robust pattern throughout human and even pre-human history.

MIRI emphasizes AI for a good reason: At the end of the day, the long-term future of our galaxy will be dictated by AI, not by biotech, nanotech, or other lower-level systems. AI is the "brains of the operation." Of course, this doesn't automatically imply that AI should be the primary focus of our attention. Maybe other revolutionary technologies or social forces will come first and deserve higher priority. In practice, I think focusing on AI specifically seems quite important even relative to competing scenarios, but it's good to explore many areas in parallel to at least a shallow depth.

In addition, I don't see a sharp distinction between "AI" and other fields. Progress in AI software relies heavily on computer hardware, and it depends at least a little bit on other fundamentals of computer science, like programming languages, operating systems, distributed systems, and networks. AI also shares significant overlap with neuroscience; this is especially true if whole brain emulation arrives before bottom-up AI. And everything else in society matters a lot too: How intelligent and engineering-oriented are citizens? How much do governments fund AI and cognitive-science research? (I'd encourage less rather than more.) What kinds of military and commercial applications are being developed? Are other industrial backbone components of society stable? What memetic lenses does society have for understanding and grappling with these trends? And so on. The AI story is part of a larger story of social and technological change, in which one part influences other parts.

Significant trends in AI may not look like the AI we see in movies. They may not involve animal-like cognitive agents as much as more "boring", business-oriented computing systems. Some of the most transformative computer technologies in the period 2000-2014 have been drones, smart phones, and social networking. These all involve some AI, but the AI is mostly used as a component of a larger, non-AI system, in which many other facets of software engineering play at least as much of a role.

Nonetheless, it seems nearly inevitable to me that digital intelligence in some form will eventually leave biological humans in the dust, if technological progress continues without faltering. This is almost obvious when we zoom out and notice that the history of life on Earth consists in one species outcompeting another, over and over again. Ecology's competitive exclusion principle suggests that in the long run, either humans or machines will ultimately occupy the role of the most intelligent beings on the planet, since "When one species has even the slightest advantage or edge over another then the one with the advantage will dominate in the long term."

Will society realize the importance of AI?

The basic premise of superintelligent machines who have different priorities than their creators has been in public consciousness for many decades. Arguably even Frankenstein, published in 1818, expresses this basic idea, though more modern forms include 2001: A Space Odyssey (1968), The Terminator (1984), I, Robot (2004), and many more. Probably most people in Western countries have at least heard of these ideas if not watched or read pieces of fiction on the topic.

So why do most people, including many of society's elites, ignore strong AI as a serious issue? One reason is just that the world is really big, and there are many important (and not-so-important) issues that demand attention. Many people think strong AI is too far off, and we should focus on nearer-term problems. In addition, it's possible that science fiction itself is part of the reason: People may write off AI scenarios as "just science fiction," as I would have done prior to late 2005. (Of course, this is partly for good reason, since depictions of AI in movies are usually very unrealistic.) Often, citing Hollywood is taken as a thought-stopping deflection of the possibility of AI getting out of control, without much in the way of substantive argument to back up that stance. For example: "let's please keep the discussion firmly within the realm of reason and leave the robot uprisings to Hollywood screenwriters."

As AI progresses, I find it hard to imagine that mainstream society will ignore the topic forever. Perhaps awareness will accrue gradually, or perhaps an AI Sputnik moment will trigger an avalanche of interest. Stuart Russell expects that

Just as nuclear fusion researchers consider the problem of containment of fusion reactions as one of the primary problems of their field, it seems inevitable that issues of control and safety will become central to AI as the field matures.

I think it's likely that issues of AI policy will be debated heavily in the coming decades, although it's possible that AI will be like nuclear weapons -- something that everyone is afraid of but that countries can't stop because of arms-race dynamics. So even if AI proceeds slowly, there's probably value in thinking more about these issues well ahead of time, though I wouldn't consider the counterfactual value of doing so to be astronomical compared with other projects in part because society will pick up the slack as the topic becomes more prominent.

[Update, Feb. 2015: I wrote the preceding paragraphs mostly in May 2014, before Nick Bostrom's Superintelligence book was released. Following Bostrom's book, a wave of discussion about AI risk emerged from Elon Musk, Stephen Hawking, Bill Gates, and many others. AI risk suddenly became a mainstream topic discussed by almost every major news outlet, with at least one or two articles each. This foreshadows what we'll see more of in the future. The outpouring of publicity for the AI topic happened far sooner than I imagined it would.]

A soft takeoff seems more likely?

Various thinkers have debated the likelihood of a "hard" takeoff -- in which a single computer or set of computers rapidly becomes superintelligent on its own -- compared with a "soft" takeoff -- in which society as a whole is transformed by AI in a more distributed, continuous fashion. "The Hanson-Yudkowsky AI-Foom Debate" discusses this in great detail. The topic has also been considered by many others, such as Ramez Naam vs. William Hertling.

For a long time I inclined toward Yudkowsky's vision of AI, because I respect his opinions and didn't ponder the details too closely. This is also the more prototypical example of rebellious AI in science fiction. In early 2014, a friend of mine challenged this view, noting that computing power is a severe limitation for human-level minds. My friend suggested that AI advances would be slow and would diffuse through society rather than remaining in the hands of a single developer team. As I've read more AI literature, I think this soft-takeoff view is pretty likely to be correct. Science is always a gradual process, and almost all AI innovations historically have moved in tiny steps. I would guess that even the evolution of humans from their primate ancestors was a "soft" takeoff in the sense that no single son or daughter was vastly more intelligent than his or her parents. The evolution of technology in general has been fairly continuous. I probably agree with Paul Christiano that "it is unlikely that there will be rapid, discontinuous, and unanticipated developments in AI that catapult it to superhuman levels [...]."

Of course, it's not guaranteed that AI innovations will diffuse throughout society. At some point perhaps governments will take control, in the style of the Manhattan Project, and they'll keep the advances secret. But even then, I expect that the internal advances by the research teams will add cognitive abilities in small steps. Even if you have a theoretically optimal intelligence algorithm, it's constrained by computing resources, so you either need lots of hardware or approximation hacks (or most likely both) before it can function effectively in the high-dimensional state space of the real world, and this again implies a slower trajectory. Marcus Hutter's AIXI(tl) is an example of a theoretically optimal general intelligence, but most AI researchers feel it won't work for artificial general intelligence (AGI) because it's astronomically expensive to compute. Ben Goertzel explains: "I think that tells you something interesting. It tells you that dealing with resource restrictions -- with the boundedness of time and space resources -- is actually critical to intelligence. If you lift the restriction to do things efficiently, then AI and AGI are trivial problems."

In "I Still Don’t Get Foom", Robin Hanson contends:

Yes, sometimes architectural choices have wider impacts. But I was an artificial intelligence researcher for nine years, ending twenty years ago, and I never saw an architecture choice make a huge difference, relative to other reasonable architecture choices. For most big systems, overall architecture matters a lot less than getting lots of detail right.

This suggests that it's unlikely that a single insight will make an astronomical difference to an AI's performance.

Similarly, my experience is that machine-learning algorithms matter less than the data they're trained on. I think this is a general sentiment among data scientists. There's a famous slogan that "More data is better data." A main reason Google's performance is so good is that it has so many users that even obscure searches, spelling mistakes, etc. will appear somewhere in its logs. But if many performance gains come from data, then they're constrained by hardware, which generally grows steadily.

Hanson's "I Still Don’t Get Foom" post continues: "To be much better at learning, the project would instead have to be much better at hundreds of specific kinds of learning. Which is very hard to do in a small project." Anders Sandberg makes a similar point:

As the amount of knowledge grows, it becomes harder and harder to keep up and to get an overview, necessitating specialization. [...] This means that a development project might need specialists in many areas, which in turns means that there is a lower size of a group able to do the development. In turn, this means that it is very hard for a small group to get far ahead of everybody else in all areas, simply because it will not have the necessary know how in all necessary areas. The solution is of course to hire it, but that will enlarge the group.

One of the more convincing anti-"foom" arguments is J. Storrs Hall's point that an AI improving itself to a world superpower would need to outpace the entire world economy of 7 billion people, plus natural resources and physical capital. It would do much better to specialize, sell its services on the market, and acquire power/wealth in the ways that most people do. There are plenty of power-hungry people in the world, but usually they go to Wall Street, K Street, or Silicon Valley rather than trying to build world-domination plans in their basement. Why would an AI be different? Some possibilities:

1. By being built differently, it's able to concoct an effective world-domination strategy that no human has thought of.

2. Its non-human form allows it to diffuse throughout the Internet and make copies of itself.

I'm skeptical of #1, though I suppose if the AI is very alien, these kinds of unknown unknowns become more plausible. #2 is an interesting point. It seems like a pretty good way to spread yourself as an AI is to become a useful software product that lots of people want to install, i.e., to sell your services on the world market, as Hall said. Of course, once that's done, perhaps the AI could find a way to take over the world. Maybe it could silently quash competitor AI projects. Maybe it could hack into computers worldwide via the Internet and Internet of Things, as the AI did in the Delete series. Maybe it could devise a way to convince humans to give it access to sensitive control systems, as Skynet did in Terminator 3.

I find these kinds of scenarios for AI takeover more plausible than a rapidly self-improving superintelligence. Indeed, even a human-level intelligence that can distribute copies of itself over the Internet might be able to take control of human infrastructure and hence take over the world. No "foom" is required.

Rather than discussing hard-vs.-soft takeoff arguments more here, I added discussion to Wikipedia where the content will receive greater readership. See "Hard vs. soft takeoff" in "Intelligence explosion".

The hard vs. soft distinction is obviously a matter of degree. And maybe how long the process takes isn't the most relevant way to slice the space of scenarios. For practical purposes, the more relevant question is: Should we expect control of AI outcomes to reside primarily in the hands of a few "seed AI" developers? In this case, altruists should focus on influencing a core group of AI experts, or maybe their military / corporate leaders. Or should we expect that society as a whole will play a big role in shaping how AI is developed and used? In this case, governance structures, social dynamics, and non-technical thinkers will play an important role not just in influencing how much AI research happens but also in how the technologies are deployed and incrementally shaped as they mature.

It's possible that one country -- perhaps the United States, or maybe China in later decades -- will lead the way in AI development, especially if the research becomes nationalized when AI technology grows more powerful. Would this country then take over the world? I'm not sure. The United States had a monopoly on nuclear weapons for several years after 1945, but it didn't bomb the Soviet Union out of existence. A country with a monopoly on artificial superintelligence might refrain from destroying its competitors as well. On the other hand, AI should enable vastly more sophisticated surveillance and control than was possible in the 1940s, so a monopoly might be sustainable even without resorting to drastic measures. In any case, perhaps a country with superintelligence would just economically outcompete the rest of the world, rendering military power superfluous.

Besides a single country taking over the world, the other possibility (perhaps more likely) is that AI is developed in a distributed fashion, either openly as is the case in academia today, or in secret by governments as is the case with other weapons of mass destruction.

Even in a soft-takeoff case, there would come a point at which humans would be unable to keep up with the pace of AI thinking. (We already see an instance of this with algorithmic stock-trading systems, although human traders are still needed for more complex tasks right now.) The reins of power would have to be transitioned to faster human uploads, trusted AIs built from scratch, or some combination of the two. In a slow scenario, there might be many intelligent systems at comparable levels of performance, maintaining a balance of power, at least for a while. In the long run, a singleton seems plausible because computers -- unlike human kings -- can reprogram their servants to want to obey their bidding, which means that as an agent gains more central authority, it's not likely to later lose it by internal rebellion (only by external aggression). Also, evolutionary competition is not a stable state, while a singleton is. It seems likely that evolution will eventually lead to a singleton at one point or another -- whether because one faction takes over the world or because the competing factions form a stable cooperation agreement -- and competition won't return after that happens. (That said, if the singleton is merely a contingent cooperation agreement among factions that still disagree, one can imagine that cooperation breaking down in the future...)

Most of humanity's problems are fundamentally coordination problems / selfishness problems. If humans were perfectly altruistic, we could easily eliminate poverty, overpopulation, war, arms races, and other social ills. There would remain "man vs. nature" problems, but these are increasingly disappearing as technology advances. Assuming a digital singleton emerges, the chances of it going extinct seem very small (except due to alien invasions or other external factors). Unless the singleton has a very myopic utility function, it should consider carefully all the consequences of its actions. This contrasts with the "fools rush in" approach that humanity currently takes toward most technological risks, due to wanting the benefits of and profits from technology right away and not wanting to lose out to competitors. For this reason, I suspect that most of George Dvorsky's "12 Ways Humanity Could Destroy The Entire Solar System" are unlikely to happen, since most of them presuppose blundering by an advanced Earth-originating intelligence. Probably by the time Earth-originating intelligence is able to carry out interplanetary engineering on a nontrivial scale, we'll already have a digital singleton that thoroughly explores the risks of its actions before executing them. That said, this might not be true if competing AIs begin astroengineering before a singleton is completely formed. (By the way, I should point out that I prefer it if the cosmos isn't successfully colonized, because doing so is likely to astronomically multiply sentience and therefore suffering.)

Intelligence explosion?

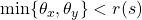

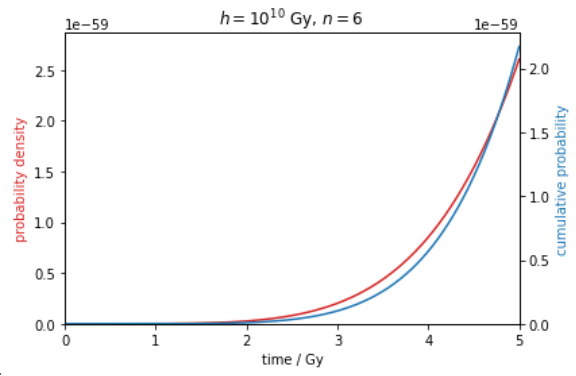

Sometimes it's claimed that we should expect a hard takeoff because AI-development dynamics will fundamentally change once AIs can start improving themselves. One stylized way to explain this is via differential equations. Let I(t) be the intelligence of AIs at time t.

- While humans are building AIs, we have dI/dt = c, where c is some constant level of human engineering ability. This implies I(t) = ct + constant, a linear growth of I with time.

- In contrast, once AIs can design themselves, we'll have dI/dt = kI for some k. That is, the rate of growth will be faster as the AI designers become more intelligent. This implies I(t) = Ae^(kt) for some constant A.
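
For readers who want the steps spelled out, here is a short worked sketch of how the two stylized equations are solved. The symbol I_0 for the intelligence level at t = 0 is my own notation for illustration; the text above only speaks of unnamed constants.

```latex
% Human-driven phase: constant rate of improvement c.
% I_0 := I(0) is an assumed name for the integration constant.
\frac{dI}{dt} = c
\quad\Longrightarrow\quad
I(t) = I_0 + ct

% Self-improving phase: rate proportional to current intelligence.
% Separate variables and integrate both sides.
\frac{dI}{dt} = kI
\quad\Longrightarrow\quad
\int \frac{dI}{I} = \int k \, dt
\quad\Longrightarrow\quad
\ln I = kt + C
\quad\Longrightarrow\quad
I(t) = A e^{kt}, \qquad A = e^{C} = I(0)
```

The growth constant k appears in the exponent, so how "explosive" the second regime looks depends heavily on its size.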

Luke Muehlhauser reports that the idea of intelligence explosion once machines can start improving themselves "ran me over like a train. Not because it was absurd, but because it was clearly true." I think this kind of exponential feedback loop is the basis behind many of the intelligence-explosion arguments.

But let's think about this more carefully. What's so special about the point where machines can understand and modify themselves? Certainly understanding your own source code helps you improve yourself. But humans already understand the source code of present-day AIs with an eye toward improving it. Moreover, present-day AIs are vastly simpler than human-level ones will be, and present-day AIs are far less intelligent than the humans who create them. Which is easier: (1) improving the intelligence of something as smart as you, or (2) improving the intelligence of something far dumber? (2) is usually easier. So if anything, AI intelligence should be "exploding" faster now, because it can be lifted up by something vastly smarter than it. Once AIs need to improve themselves, they'll have to pull up on their own bootstraps, without the guidance of an already existing model of far superior intelligence on which to base their designs.

As an analogy, it's harder to produce novel developments if you're the market-leading company; it's easier if you're a competitor trying to catch up, because you know what to aim for and what kinds of designs to reverse-engineer. AI right now is like a competitor trying to catch up to the market leader.

Another way to say this: The constants in the differential equations might be important. Even if human AI-development progress is linear, that progress might be faster than a slow exponential curve until some point far later where the exponential catches up.
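
To make the point about constants concrete, here is a minimal sketch that finds when a slow exponential catches up with a linear trend. It assumes both curves start from the same level `i0` and uses the stylized forms I(t) = i0 + c·t and I(t) = i0·e^(kt). The function names and the parameter values in the usage lines are made up for illustration and are not estimates of anything real.

```python
import math


def linear_intelligence(t, i0, c):
    """Stylized human-driven progress: I(t) = i0 + c*t."""
    return i0 + c * t


def exponential_intelligence(t, i0, k):
    """Stylized self-improving progress: I(t) = i0 * exp(k*t)."""
    return i0 * math.exp(k * t)


def catch_up_time(i0, c, k, tol=1e-9):
    """Return the time t > 0 at which the exponential curve catches the
    linear one, or 0.0 if the exponential is never behind.

    Assumes i0 > 0, c > 0, and k > 0 (illustrative assumptions).
    """
    # At t = 0 the exponential grows at rate i0*k and the linear curve at c.
    if i0 * k >= c:
        return 0.0

    def gap(t):
        return exponential_intelligence(t, i0, k) - linear_intelligence(t, i0, c)

    # The gap is most negative where the two growth rates are equal.
    lo = math.log(c / (i0 * k)) / k
    hi = 2 * lo
    while gap(hi) < 0:  # expand until the exponential is ahead
        hi *= 2

    # Plain bisection on the sign change between lo and hi.
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if gap(mid) < 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


if __name__ == "__main__":
    # Hypothetical values: linear rate c = 1, exponential rate k = 0.05.
    t_star = catch_up_time(i0=1.0, c=1.0, k=0.05)
    print(f"Exponential catches up at t = {t_star:.2f}")
```

With these made-up values, the linear curve stays ahead until roughly t = 90, even though the exponential eventually wins. Smaller k or larger c pushes that crossover further out.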

In any case, I'm cautious of simple differential equations like these. Why should the rate of intelligence increase be proportional to the intelligence level? Maybe the problems become much harder at some point. Maybe the systems become fiendishly complicated, such that even small improvements take a long time. Robin Hanson echoes this suggestion:

Students get smarter as they learn more, and learn how to learn. However, we teach the most valuable concepts first, and the productivity value of schooling eventually falls off, instead of exploding to infinity. Similarly, the productivity improvement of factory workers typically slows with time, following a power law.

At the world level, average IQ scores have increased dramatically over the last century (the Flynn effect), as the world has learned better ways to think and to teach. Nevertheless, IQs have improved steadily, instead of accelerating. Similarly, for decades computer and communication aids have made engineers much "smarter," without accelerating Moore's law. While engineers got smarter, their design tasks got harder.

Also, ask yourself this question: Why do startups exist? Part of the answer is that they can innovate faster than big companies due to having less institutional baggage and legacy software. It's harder to make radical changes to big systems than small systems. Of course, like the economy does, a self-improving AI could create its own virtual startups to experiment with more radical changes, but just as in the economy, it might take a while to prove new concepts and then transition old systems to the new and better models.

In discussions of intelligence explosion, it's common to approximate AI productivity as scaling linearly with number of machines, but this may or may not be true depending on the degree of parallelizability. Empirical examples for human-engineered projects show diminishing returns with more workers, and while computers may be better able to partition work due to greater uniformity and speed of communication, there will remain some overhead in parallelization. Some tasks may be inherently non-parallelizable, preventing the kinds of ever-faster performance that the most extreme explosion scenarios envisage.
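
One standard way to put numbers on this is Amdahl's law, which I'm bringing in here as an illustration rather than as the model the argument above relies on. It assumes a fixed fraction of the work can be split perfectly across machines while the rest must run serially, and it ignores communication overhead. The function name and the 90% figure below are hypothetical.

```python
def amdahl_speedup(num_machines, parallel_fraction):
    """Speedup over one machine under Amdahl's law.

    parallel_fraction is the share of the work (0 to 1) that can be split
    evenly across machines; the remainder runs serially.
    """
    if num_machines < 1:
        raise ValueError("num_machines must be at least 1")
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / num_machines)


if __name__ == "__main__":
    # Hypothetical task in which 90% of the work parallelizes.
    for n in (1, 10, 100, 1000, 1_000_000):
        print(f"{n:>9} machines -> speedup {amdahl_speedup(n, 0.9):.2f}x")
```

Under these assumptions, a task that is 90% parallelizable can never run more than 10 times faster no matter how many machines are added, because the serial 10% sets the ceiling.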

Fred Brooks's "No Silver Bullet" paper argued that "there is no single development, in either technology or management technique, which by itself promises even one order of magnitude improvement within a decade in productivity, in reliability, in simplicity." Likewise, Wirth's law reminds us of how fast software complexity can grow. These points make it seem less plausible that an AI system could rapidly bootstrap itself to superintelligence using just a few key as-yet-undiscovered insights.

Chollet (2017) notes that "even if one part of a system has the ability to recursively self-improve, other parts of the system will inevitably start acting as bottlenecks." We might compare this with Liebig's law of the minimum: "growth is dictated not by total resources available, but by the scarcest resource (limiting factor)." Individual sectors of the human economy can show rapid growth at various times, but the growth rate of the entire economy is more limited.

Eventually there has to be a leveling off of intelligence increase if only due to physical limits. On the other hand, one argument in favor of differential equations is that the economy has fairly consistently followed exponential trends since humans evolved, though the exponential growth rate of today's economy remains small relative to what we typically imagine from an "intelligence explosion".

I think a stronger case for intelligence explosion is the clock-speed difference between biological and digital minds. Even if AI development becomes very slow in subjective years, once AIs take it over, in objective years (i.e., revolutions around the sun), the pace will continue to look blazingly fast. But if enough of society is digital by that point (including human-inspired subroutines and maybe full digital humans), then digital speedup won't give a unique advantage to a single AI project that can then take over the world. Hence, hard takeoff in the sci-fi sense still isn't guaranteed. Also, Hanson argues that faster minds would produce a one-time jump in economic output but not necessarily a sustained higher rate of growth.

Another case for intelligence explosion is that intelligence growth might not be driven by the intelligence of a given agent so much as by the collective man-hours (or machine-hours) that would become possible with more resources. I suspect that AI research could accelerate at least 10 times if it had 10-50 times more funding. (This is not the same as saying I want funding increased; in fact, I probably want funding decreased to give society more time to sort through these issues.) The population of digital minds that could be created in a few decades might exceed the biological human population, which would imply faster progress if only by numerosity. Also, the digital minds might not need to sleep, would focus intently on their assigned tasks, etc. However, once again, these are advantages in objective time rather than collective subjective time. And these advantages would not be uniquely available to a single first-mover AI project; any wealthy and technologically sophisticated group that wasn't too far behind the cutting edge could amplify its AI development in this way.

(A few weeks after writing this section, I learned that Ch. 4 of Nick Bostrom's Superintelligence: Paths, Dangers, Strategies contains surprisingly similar content, even up to the use of dI/dt as the symbols in a differential equation. However, Bostrom comes down mostly in favor of the likelihood of an intelligence explosion. I reply to Bostrom's arguments in the next section.)

Reply to Bostrom's arguments for a hard takeoff

Note: Søren Elverlin replies to this section in a video presentation. I agree with some of his points and disagree with others.

In Ch. 4 of Superintelligence, Bostrom suggests several factors that might lead to a hard or at least semi-hard takeoff. I don't fully disagree with his points, and because these are difficult issues, I agree that Bostrom might be right. But I want to play devil's advocate and defend the soft-takeoff view. I've distilled and paraphrased what I think are 6 core arguments, and I reply to each in turn.

#1: There might be a key missing algorithmic insight that allows for dramatic progress.

Maybe, but do we have much precedent for this? As far as I'm aware, all individual AI advances -- and indeed, most technology advances in general -- have not represented astronomical improvements over previous designs. Maybe connectionist AI systems represented a game-changing improvement relative to symbolic AI for messy tasks like vision, but I'm not sure how much of an improvement they represented relative to the best alternative technologies. After all, neural networks are in some sense just fancier forms of pre-existing statistical methods like logistic regression. And even neural networks came in stages, with the perceptron, multi-layer networks, backpropagation, recurrent networks, deep networks, etc. The most groundbreaking machine-learning advances may reduce error rates by a half or something, which may be commercially very important, but this is not many orders of magnitude as hard-takeoff scenarios tend to assume.

Outside of AI, the Internet changed the world, but it was an accumulation of many insights. Facebook has had massive impact, but it too was built from many small parts and grew in importance slowly as its size increased. Microsoft became a virtual monopoly in the 1990s but perhaps more for business than technology reasons, and its power in the software industry at large is probably not growing. Google has a quasi-monopoly on web search, kicked off by the success of PageRank, but most of its improvements have been small and gradual. Google has grown very powerful, but it hasn't maintained a permanent advantage that would allow it to take over the software industry.

Acquiring nuclear weapons might be the closest example of a single discrete step that most dramatically changes a country's position, but this may be an outlier. Maybe other advances in weaponry (arrows, guns, etc.) historically have had somewhat dramatic effects.

Bostrom doesn't present specific arguments for thinking that a few crucial insights may produce radical jumps. He suggests that we might not notice a system's improvements until it passes a threshold, but this seems absurd, because at least the AI developers would need to be intimately acquainted with the AI's performance. While not strictly accurate, there's a slogan: "You can't improve what you can't measure." Maybe the AI's progress wouldn't make world headlines, but the academic/industrial community would be well aware of nontrivial breakthroughs, and the AI developers would live and breathe performance numbers.

#2: Once an AI passes a threshold, it might be able to absorb vastly more content (e.g., by reading the Internet) that was previously inaccessible.

Absent other concurrent improvements, I'm doubtful this would produce take-over-the-world superintelligence, because the world's current superintelligence (namely, humanity as a whole) has already read most of the Internet -- indeed, has written it. I guess humans haven't read all automatically generated text or vast streams of numerical data, but the insights gleaned purely from reading such material would be low without doing more sophisticated data mining and learning on top of it, and presumably such data mining would have already been in progress well before Bostrom's hypothetical AI learned how to read.

In any case, I doubt reading with understanding is such an all-or-nothing activity that it can suddenly "turn on" once the AI achieves a certain ability level. As Bostrom says (p. 71), reading with the comprehension of a 10-year-old is probably AI-complete, i.e., requires solving the general AI problem. So assuming that you can switch on reading ability with one improvement is equivalent to assuming that a single insight can produce astronomical gains in AI performance, which we discussed above. If that's not true, and if before the AI system with 10-year-old reading ability was an AI system with a 6-year-old reading ability, why wouldn't that AI have already devoured the Internet? And before that, why wouldn't a proto-reader have devoured a version of the Internet that had been processed to make it easier for a machine to understand? And so on, until we get to the present-day TextRunner system that Bostrom cites, which is already devouring the Internet. It doesn't make sense that massive amounts of content would only be added after lots of improvements. Commercial incentives tend to yield exactly the opposite effect: converting the system to a large-scale product when even modest gains appear, because these may be enough to snatch a market advantage.

The fundamental point is that I don't think there's a crucial set of components to general intelligence that all need to be in place before the whole thing works. It's hard to evolve systems that require all components to be in place at once, which suggests that human general intelligence probably evolved gradually. I expect it's possible to get partial AGI with partial implementations of the components of general intelligence, and the components can gradually be made more general over time. Components that are lacking can be supplemented by human-based computation and narrow-AI hacks until more general solutions are discovered. Compare with minimum viable products and agile software development. As a result, society should be upended by partial AGI innovations many times over the coming decades, well before fully human-level AGI is finished.

#3: Once a system "proves its mettle by attaining human-level intelligence", funding for hardware could multiply.

I agree that funding for AI could multiply manyfold due to a sudden change in popular attention or political dynamics. But I'm thinking of something like a factor of 10 or maybe 50 in an all-out Cold War-style arms race. A factor-of-50 boost in hardware isn't obviously that important. If before there was one human-level AI, there would now be 50. In any case, I expect the Sputnik moment(s) for AI to happen well before it achieves a human level of ability. Companies and militaries aren't stupid enough not to invest massively in an AI with almost-human intelligence.

#4: Once the human level of intelligence is reached, "Researchers may work harder, [and] more researchers may be recruited".

As with hardware above, I would expect these "shit hits the fan" moments to happen before fully human-level AI. In any case:

- It's not clear there would be enough AI specialists to recruit in a short time. Other quantitatively minded people could switch to AI work, but they would presumably need years of experience to produce cutting-edge insights.

- The number of people thinking about AI safety, ethics, and social implications should also multiply during Sputnik moments. So the ratio of AI policy work to total AI work might not change relative to slower takeoffs, even if the physical time scales would compress.

#5: At some point, the AI's self-improvements would dominate those of human engineers, leading to exponential growth.

I discussed this in the "Intelligence explosion?" section above. A main point is that we see many other systems, such as the world economy or Moore's law, that also exhibit positive feedback and hence exponential growth, but these aren't "fooming" at an astounding rate. It's not clear why an AI's self-improvement -- which resembles economic growth and other complex phenomena -- should suddenly explode faster (in subjective time) than humanity's existing recursive self-improvement of its intelligence via digital computation.

On the other hand, maybe the difference between subjective and objective time is important. If a human-level AI could think, say, 10,000 times faster than a human, then assuming linear scaling, it would be worth 10,000 engineers. By the time of human-level AI, I expect there would be far more than 10,000 AI developers on Earth, but given enough hardware, the AI could copy itself manyfold until its subjective time far exceeded that of human experts. The speed and copiability advantages of digital minds seem perhaps the strongest arguments for a takeoff that happens rapidly relative to human observers. Note that, as Hanson said above, this digital speedup might be just a one-time boost, rather than a permanently higher rate of growth, but even the one-time boost could be enough to radically alter the power dynamics of humans vis-à-vis machines. That said, there should be plenty of slightly sub-human AIs by this time, and maybe they could fill some speed gaps on behalf of biological humans.
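The arithmetic behind the speed and copiability point can be sketched as follows. This takes the linear-scaling assumption from the paragraph above at face value; the figure of 1,000,000 human AI developers is a hypothetical number chosen only for illustration, and the function names are my own.

```python
import math

def engineer_equivalents(speedup, copies=1):
    """Human-engineer equivalents of `copies` AIs, each thinking `speedup`
    times faster than a human, assuming purely linear scaling."""
    return speedup * copies

def copies_to_match(human_developers, speedup):
    """Smallest number of AI copies whose combined subjective time matches
    or exceeds that of `human_developers` human researchers."""
    return math.ceil(human_developers / speedup)

# One AI at a 10,000x speedup, as in the text.
print(engineer_equivalents(10_000))        # 10000

# Hypothetical: 1,000,000 human AI developers worldwide.
print(copies_to_match(1_000_000, 10_000))  # 100
```

Linear scaling is almost certainly too generous, since serial speed and parallel copies are not perfectly interchangeable with independent human researchers, so these numbers are better read as an upper bound than as a forecast.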

In general, it's a mistake to imagine human-level AI against a backdrop of our current world. That's like imagining a Tyrannosaurus rex in a human city. Rather, the world will look very different by the time human-level AI arrives. Before AI can exceed human performance in all domains, it will exceed human performance in many narrow domains gradually, and these narrow-domain AIs will help humans respond quickly. For example, a narrow AI that's an expert at military planning based on war games can help humans with possible military responses to rogue AIs.

Many of the intermediate steps on the path to general AI will be commercially useful and thus should diffuse widely in the meanwhile. As user "HungryHobo" noted: "If you had a near human level AI, odds are, everything that could be programmed into it at the start to help it with software development is already going to be part of the suites of tools for helping normal human programmers." Even if AI research becomes nationalized and confidential, its developers should still have access to almost-human-level digital-speed AI tools, which should help smooth the transition. For instance, Bostrom mentions how in the 2010 flash crash (Box 2, p. 17), a high-speed positive-feedback spiral was terminated by a high-speed "circuit breaker". This is already an example where problems happening faster than humans could comprehend them were averted due to solutions happening faster than humans could comprehend them. See also the discussion of "tripwires" in Superintelligence (p. 137).

Conversely, many globally disruptive events may happen well before fully human-level AI arrives, since even sub-human AI may be prodigiously powerful.

#6: "even when the outside world has a greater total amount of relevant research capability than any one project", the optimization power of the project might be more important than that of the world "since much of the outside world's capability is not focused on the particular system in question". Hence, the project might take off and leave the world behind. (Box 4, p. 75)

What one makes of this argument depends on how many people are needed to engineer how much progress. The Watson system that played on Jeopardy! required 15 people over ~4(?) years -- given the existing tools of the rest of the world at that time, which had been developed by millions (indeed, billions) of other people. Watson was a much smaller leap forward than that needed to give a general intelligence a take-over-the-world advantage. How many more people would be required to achieve such a radical leap in intelligence? This seems to be a main point of contention in the debate between believers in soft vs. hard takeoff.

How complex is the brain?

Can we get insight into how hard general intelligence is based on neuroscience? Is the human brain fundamentally simple or complex?

One basic algorithm?

Jeff Hawkins, Andrew Ng, and others speculate that the brain may have one fundamental algorithm for intelligence -- deep learning in the cortical column. This idea gains plausibility from the brain's plasticity. For instance, blind people can appropriate the visual cortex for auditory processing. Artificial neural networks can be used to classify any kind of input -- not just visual and auditory but even highly abstract, like features about credit-card fraud or stock prices.

Maybe there's one fundamental algorithm for input classification, but this doesn't imply one algorithm for all that the brain does. Beyond the cortical column, the brain has many specialized structures that seem to perform very specialized functions, such as reward learning in the basal ganglia, fear processing in the amygdala, etc. Of course, it's not clear how essential all of these parts are or how easy it would be to replace them with artificial components performing the same basic functions.

One argument for faster AGI takeoffs is that humans have been able to learn many sophisticated things (e.g., advanced mathematics, music, writing, programming) without requiring any genetic changes. And what we now know doesn't seem to represent any kind of limit to what we could know with more learning. The human collection of cognitive algorithms is very flexible, which seems to belie claims that all intelligence requires specialized designs. On the other hand, even if human genes haven't changed much in the last 10,000 years, human culture has evolved substantially, and culture undergoes slow trial-and-error evolution in much the same way that genes do. So one could argue that human intellectual achievements are not fully general but rely on a vast amount of specialized, evolved content. Just as a single random human isolated from society probably couldn't develop general relativity on his own in a lifetime, so a single random human-level AGI probably couldn't either. Culture is the new genome, and it progresses slowly.

Moreover, some scholars believe that certain human abilities, such as language, are essentially based on genetic hard-wiring:

The approach taken by Chomsky and Marr toward understanding how our minds achieve what they do is as different as can be from behaviorism. The emphasis here is on the internal structure of the system that enables it to perform a task, rather than on external association between past behavior of the system and the environment. The goal is to dig into the "black box" that drives the system and describe its inner workings, much like how a computer scientist would explain how a cleverly designed piece of software works and how it can be executed on a desktop computer.

Chomsky himself notes:

There's a fairly recent book by a very good cognitive neuroscientist, Randy Gallistel and King, arguing -- in my view, plausibly -- that neuroscience developed kind of enthralled to associationism and related views of the way humans and animals work. And as a result they've been looking for things that have the properties of associationist psychology.

[...] Gallistel has been arguing for years that if you want to study the brain properly you should begin, kind of like Marr, by asking what tasks is it performing. So he's mostly interested in insects. So if you want to study, say, the neurology of an ant, you ask what does the ant do? It turns out the ants do pretty complicated things, like path integration, for example. If you look at bees, bee navigation involves quite complicated computations, involving position of the sun, and so on and so forth. But in general what he argues is that if you take a look at animal cognition, human too, it is computational systems.

Many parts of the human body, like the digestive system or bones/muscles, are extremely complex and fine-tuned, yet few people argue that their development is controlled by learning. So it's not implausible that a lot of the brain's basic architecture could be similarly hard-coded.

Typically AGI researchers express scorn for manually tuned software algorithms that don't rely on fully general learning. But Chomsky's stance challenges that sentiment. If Chomsky is right, then a good portion of human "general intelligence" is finely tuned, hard-coded software of the sort that we see in non-AI branches of software engineering. And this view would suggest a slower AGI takeoff because time and experimentation are required to tune all the detailed, specific algorithms of intelligence.

Ontogenetic development

A full-fledged superintelligence probably requires very complex design, but it may be possible to build a "seed AI" that would recursively self-improve toward superintelligence. Alan Turing proposed this in his 1950 "Computing machinery and intelligence":

Instead of trying to produce a programme to simulate the adult mind, why not rather try to produce one which simulates the child's? If this were then subjected to an appropriate course of education one would obtain the adult brain. Presumably the child brain is something like a notebook as one buys it from the stationer's. Rather little mechanism, and lots of blank sheets. (Mechanism and writing are from our point of view almost synonymous.) Our hope is that there is so little mechanism in the child brain that something like it can be easily programmed.

Animal development appears to be at least somewhat robust based on the fact that the growing organisms are often functional despite a few genetic mutations and variations in prenatal and postnatal environments. Such variations may indeed make an impact -- e.g., healthier development conditions tend to yield more physically attractive adults -- but most humans mature successfully over a wide range of input conditions.

On the other hand, an argument against the simplicity of development is the immense complexity of our DNA. It accumulated over billions of years through vast numbers of evolutionary "experiments". It's not clear that human engineers could perform enough measurements to tune ontogenetic parameters of a seed AI in a short period of time. And even if the parameter settings worked for early development, they would probably fail for later development. Rather than a seed AI developing into an "adult" all at once, designers would develop the AI in small steps, since each next stage of development would require significant tuning to get right.

Think about how much effort is required for human engineers to build even relatively simple systems. For example, I think the number of developers who work on Microsoft Office is in the thousands. Microsoft Office is complex but is still far simpler than a mammalian brain. Brains have lots of little parts that have been fine-tuned. That kind of complexity requires immense work by software developers to create. The main counterargument is that there may be a simple meta-algorithm that would allow an AI to bootstrap to the point where it could fine-tune all the details on its own, without requiring human inputs. This might be the case, but my guess is that any elegant solution would be hugely expensive computationally. For instance, biological evolution was able to fine-tune the human brain, but it did so with immense amounts of computing power over millions of years.

Brain quantity vs. quality

A common analogy for the gulf between superintelligence and humans is the gulf between humans and chimpanzees. In Consciousness Explained, Daniel Dennett mentions (pp. 189-190) how our hominid ancestors had brains roughly four times the volume of those of chimps but roughly the same in structure. This might incline one to imagine that brain size alone could yield superintelligence. Maybe we'd just need to quadruple human brains once again to produce superintelligent humans? If so, wouldn't this imply a hard takeoff, since quadrupling hardware is relatively easy?

But in fact, as Dennett explains, the quadrupling of brain size from chimps to pre-humans completed before the advent of language, cooking, agriculture, etc. In other words, the main "foom" of humans came from culture rather than brain size per se -- from software in addition to hardware. Yudkowsky seems to agree: "Humans have around four times the brain volume of chimpanzees, but the difference between us is probably mostly brain-level cognitive algorithms."

But cultural changes (software) arguably progress a lot more slowly than hardware. The intelligence of human society has grown exponentially, but it's a slow exponential, and rarely have there been innovations that allowed one group to quickly overpower everyone else within the same region of the world. (Between isolated regions of the world the situation was sometimes different -- e.g., Europeans with Maxim guns overpowering Africans because of very different levels of industrialization.)

More impact in hard-takeoff scenarios?

Some, including Owen Cotton-Barratt and Toby Ord, have argued that even if we think soft takeoffs are more likely, there may be higher value in focusing on hard-takeoff scenarios because these are the cases in which society would have the least forewarning and the fewest people working on AI altruism issues. This is a reasonable point, but I would add that

- Maybe hard takeoffs are sufficiently improbable that focusing on them still doesn't have highest priority. (Of course, some exploration of fringe scenarios is worthwhile.) There may be important advantages to starting early in shaping how society approaches soft takeoffs, and if a soft takeoff is very likely, those efforts may have more expected impact.

- Thinking about the most likely AI outcomes rather than the most impactful outcomes also gives us a better platform on which to contemplate other levers for shaping the future, such as non-AI emerging technologies, international relations, governance structures, values, etc. Focusing on a tail AI scenario doesn't inform non-AI work very well because that scenario probably won't happen. Promoting antispeciesism matters whether there's a hard or soft takeoff (indeed, maybe more in the soft-takeoff case), so our model of how the future will unfold should generally focus on likely scenarios. Plus, even if we do ultimately choose to focus on a Pascalian low-probability-but-high-impact scenario, learning more about the most likely future outcomes can better position us to find superior (more likely and/or more important) Pascalian wagers that we haven't thought of yet. Edifices of understanding are not built on Pascalian wagers.

- As a more general point about expected-value calculations, I think improving one's models of the world (i.e., one's probabilities) is generally more important than improving one's estimates of the values of outcomes conditional on them occurring. Why? Our current frameworks for envisioning the future may be very misguided, and estimates of "values of outcomes" may become obsolete if our conception of what outcomes will even happen changes radically. It's more important to make crucial insights that will shatter our current assumptions and get us closer to truth than it is to refine value estimates within our current, naive world models. As an example, philosophers in the Middle Ages would have accomplished little if they had asked what God-glorifying actions to focus on by evaluating which devout obeisances would have the greatest upside value if successful. Such philosophers would have accomplished more if they had explored whether a God even existed. Of course, sometimes debates on factual questions are stalled, and perhaps there may be lower-hanging fruit in evaluating the prudential implications of different scenarios ("values of outcomes") until further epistemic progress can be made on the probabilities of outcomes. (Thanks to a friend for inspiring this point.)

In any case, the hard-soft distinction is not binary, and maybe the best place to focus is on scenarios where human-level AI takes over on a time scale of a few years. (Timescales of months, days, or hours strike me as pretty improbable, unless, say, Skynet gets control of nuclear weapons.)

In Superintelligence, Nick Bostrom suggests (Ch. 4, p. 64) that "Most preparations undertaken before onset of [a] slow takeoff would be rendered obsolete as better solutions would gradually become visible in the light of the dawning era." Toby Ord uses the term "nearsightedness" to refer to the ways in which research too far in advance of an issue's emergence may not be as useful as research when more is known about the issue. Ord contrasts this with benefits of starting early, including course-setting. I think Ord's counterpoints argue against the contention that early work wouldn't matter that much in a slow takeoff. Some of how society responded to AI surpassing human intelligence might depend on early frameworks and memes. (For instance, consider the lingering impact of Terminator imagery on almost any present-day popular-media discussion of AI risk.) Some fundamental work would probably not be overthrown by later discoveries; for instance, algorithmic-complexity bounds of key algorithms were discovered decades ago but will remain relevant until intelligence dies out, possibly billions of years from now. Some non-technical policy and philosophy work would be less obsoleted by changing developments. And some AI preparation would be relevant both in the short term and the long term. Slow AI takeoff to reach the human level is already happening, and more minds should be exploring these questions well in advance.

Making a related though slightly different point, Bostrom argues in Superintelligence (Ch. 5, pp. 85-86) that individuals might play more of a role in cases where elites and governments underestimate the significance of AI: "Activists seeking maximum expected impact may therefore wish to focus most of their planning on [scenarios where governments come late to the game], even if they believe that scenarios in which big players end up calling all the shots are more probable." Again I would qualify this with the note that we shouldn't confuse "acting as if" governments will come late with believing they actually will come late when thinking about most likely future scenarios.

Even if one does wish to bet on low-probability, high-impact scenarios of fast takeoff and governmental neglect, this doesn't speak to whether or how we should push on takeoff speed and governmental attention themselves. Following are a few considerations.

Takeoff speed

- In favor of fast takeoff:

  - A singleton is more likely, thereby averting possibly disastrous conflict among AIs.

  - If one prefers uncontrolled AI, fast takeoffs seem more likely to produce it.

- In favor of slow takeoff:

  - More time for many parties to participate in shaping the process, compromising, and developing less damaging pathways to AI takeoff.

  - If one prefers controlled AI, slow takeoffs seem more likely to produce it in general. (There are some exceptions. For instance, fast takeoff of an AI built by a very careful group might remain more controlled than an AI built by committees and messy politics.)

Amount of government/popular attention to AI

- In favor of more:

  - Would yield much more reflection, discussion, negotiation, and pluralistic representation.

  - If one favors controlled AI, it's plausible that multiplying the number of people thinking about AI would multiply consideration of failure modes.

  - Public pressure might help curb arms races, in analogy with public opposition to nuclear arms races.

- In favor of less:

  - Wider attention to AI might accelerate arms races rather than inducing cooperation on more circumspect planning.

  - The public might freak out and demand counterproductive measures in response to the threat.

  - If one prefers uncontrolled AI, that outcome may be less likely with many more human eyes scrutinizing the issue.

Village idiot vs. Einstein

One of the strongest arguments for hard takeoff is this one by Yudkowsky:

the distance from "village idiot" to "Einstein" is tiny, in the space of brain designs

Or as Scott Alexander put it:

It took evolution twenty million years to go from cows with sharp horns to hominids with sharp spears; it took only a few tens of thousands of years to go from hominids with sharp spears to moderns with nuclear weapons.

I think we shouldn't take relative evolutionary timelines at face value, because most of the previous 20 million years of mammalian evolution weren't focused on improving human intelligence; most of the evolutionary selection pressure was directed toward optimizing other traits. In contrast, cultural evolution places greater emphasis on intelligence because that trait is more important in human society than it is in most animal fitness landscapes.

Still, the overall point is important: The tweaks to a brain needed to produce human-level intelligence may not be huge compared with the designs needed to produce chimp intelligence, but the differences in the behaviors of the two systems, when placed in a sufficiently information-rich environment, are huge.

Nonetheless, I incline toward thinking that the transition from human-level AI to an AI significantly smarter than all of humanity combined would be somewhat gradual (requiring at least years if not decades) because the absolute scale of improvements needed would still be immense and would be limited by hardware capacity. But if hardware becomes many orders of magnitude more efficient than it is today, then things could indeed move more rapidly.

Another important criticism of the "village idiot" point is that it lacks context. While a village idiot in isolation will not produce rapid progress toward superintelligence, one Einstein plus a million village idiots working for him can produce AI progress much faster than one Einstein alone. The narrow-intelligence software tools that we build are dumber than village idiots in isolation, but collectively, when deployed in thoughtful ways by smart humans, they allow humans to achieve much more than Einstein by himself with only pencil and paper. This observation weakens the idea of a phase transition when human-level AI is developed, because village-idiot-level AIs in the hands of humans will already be achieving "superhuman" levels of performance. If we think of human intelligence as the number 1 and human-level AI that can build smarter AI as the number 2, then rather than imagining a transition from 1 to 2 at one crucial point, we should think of our "dumb" software tools as taking us to 1.1, then 1.2, then 1.3, and so on. (My thinking on this point was inspired by Ramez Naam.)

AI performance in games vs. the real world

Many of the most impressive AI achievements of the 2010s were improvements at game play, both video games like Atari games and board/card games like Go and poker. Some people infer from these accomplishments that AGI may not be far off. I think performance in these simple games doesn't give much evidence that a world-conquering AGI could arise within a decade or two.

A main reason is that most of the games at which AI has excelled have had simple rules and a limited set of possible actions at each turn. Russell and Norvig (2003), pp. 161-62: "For AI researchers, the abstract nature of games makes them an appealing subject for study. The state of a game is easy to represent, and agents are usually restricted to a small number of actions whose outcomes are defined by precise rules." In games like Space Invaders or Go, you can see the entire world at once and represent it as a two-dimensional grid. You can also consider all possible actions at a given turn. For example, AlphaGo's "policy networks" gave "a probability value for each possible legal move (i.e. the output of the network is as large as the board)" (as summarized by Burger 2016). Likewise, DeepMind's deep Q-network for playing Atari games had "a single output for each valid action" (Mnih et al. 2015, p. 530).
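To illustrate what "one output per possible action" means in practice, here is a minimal toy sketch. It is not AlphaGo's or DeepMind's actual architecture: the function `masked_policy` is hypothetical, the raw scores are placeholders for what a trained network would produce, and the pass move is ignored. The only facts relied on are that a standard Go board is 19x19 and that illegal moves should receive zero probability.

```python
import math

BOARD_SIZE = 19                        # standard Go board
NUM_POINTS = BOARD_SIZE * BOARD_SIZE   # 361 candidate moves

def masked_policy(scores, legal):
    """Turn one raw score per board point into a probability per legal move.

    `scores` and `legal` are flat lists with one entry per board point.
    Illegal points get probability 0; the rest are normalized with a softmax.
    """
    if len(scores) != len(legal):
        raise ValueError("scores and legal must have the same length")
    if not any(legal):
        raise ValueError("at least one move must be legal")
    # Subtract the largest legal score for numerical stability.
    top = max(s for s, ok in zip(scores, legal) if ok)
    weights = [math.exp(s - top) if ok else 0.0 for s, ok in zip(scores, legal)]
    total = sum(weights)
    return [w / total for w in weights]

# Placeholder scores: every point equally attractive, one point occupied.
scores = [0.0] * NUM_POINTS
legal = [True] * NUM_POINTS
legal[0] = False
probs = masked_policy(scores, legal)
print(len(probs))  # 361
```

The entire action space fits in a list of a few hundred numbers, which is exactly what makes exhaustive enumeration of actions feasible in such games.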

In contrast, the state space of the world is enormous, heterogeneous, not easily measured, and not easily represented in a simple two-dimensional grid. Plus, the number of possible actions that one can take at any given moment is almost unlimited; for instance, even just considering actions of the form "print to the screen a string of uppercase or lowercase alphabetical characters fewer than 50 characters long", the number of possibilities for what text to print out is larger than the number of atoms in the observable universe. These problems seem to require hierarchical world models and hierarchical planning of actions—allowing for abstraction of complexity into simplified and high-level conceptualizations—as well as the data structures, learning algorithms, and simulation capabilities on which such world models and plans can be based.
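
The count in the printing example can be checked with a few lines of arithmetic. The sketch below assumes an alphabet of 52 letters (26 uppercase plus 26 lowercase), counts the non-empty strings of length 1 through 49, and compares the total with 10^80, a commonly cited rough estimate of the number of atoms in the observable universe. The function name and the 10^80 figure are assumptions made for illustration.

```python
ALPHABET_SIZE = 26 * 2   # uppercase plus lowercase letters
MAX_LENGTH = 49          # "fewer than 50 characters long"
ATOMS_ESTIMATE = 10 ** 80  # rough order-of-magnitude estimate (assumption)

def count_strings(alphabet_size: int, max_length: int) -> int:
    """Count non-empty strings of length 1..max_length over the alphabet."""
    return sum(alphabet_size ** length for length in range(1, max_length + 1))

num_strings = count_strings(ALPHABET_SIZE, MAX_LENGTH)
print(f"number of strings has {len(str(num_strings))} digits")
print(f"exceeds atom estimate: {num_strings > ATOMS_ESTIMATE}")
```

Under these assumptions the number of possible strings exceeds the atom estimate by several orders of magnitude, and that is for one narrow family of actions.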

Some people may be impressed that AlphaGo uses "intuition" (i.e., deep neural networks), like human players do, and doesn't rely purely on brute-force search and hand-crafted heuristic evaluation functions the way that Deep Blue did to win at chess. But the idea that computers can have "intuition" is nothing new, since that's what most machine-learning classifiers are about.

Machine learning, especially supervised machine learning, is very popular these days compared with other aspects of AI. Perhaps this is because, unlike most other parts of AI, machine learning can easily be commercialized? But even if visual, auditory, and other sensory recognition can be replicated by machine learning, this doesn't get us to AGI. In my opinion, the hard part of AGI (or at least, the part we haven't made as much progress on) is how to hook together various narrow-AI modules and abilities into a more generally intelligent agent that can figure out what abilities to deploy in various contexts in pursuit of higher-level goals. Hierarchical planning in complex worlds, rich semantic networks, and general "common sense" in various flavors still seem largely absent from many state-of-the-art AI systems as far as I can tell. I don't think these are problems that you can just bypass by scaling up deep reinforcement learning or something.

Kaufman (2017a) says regarding a conversation with professor Bryce Wiedenbeck: "Bryce thinks there are deep questions about what intelligence really is that we don't understand yet, and that as we make progress on those questions we'll develop very different sorts of [machine-learning] systems. If something like today's deep learning is still a part of what we eventually end up with, it's more likely to be something that solves specific problems than as a critical component." Personally, I think deep learning (or something functionally analogous to it) is likely to remain a big component of future AI systems. Two lines of evidence for this view are that (1) supervised machine learning has been a cornerstone of AI for decades and (2) animal brains, including the human cortex, seem to rely crucially on something like deep learning for sensory processing. However, I agree with Bryce that there remain big parts of human intelligence that aren't captured by even a scaled-up version of deep learning.

I also largely agree with Michael Littman's expectations as described by Kaufman (2017b): "I asked him what he thought of the idea that to we could get AGI with current techniques, primarily deep neural nets and reinforcement learning, without learning anything new about how intelligence works or how to implement it [...]. He didn't think this was possible, and believes there are deep conceptual issues we still need to get a handle on."

Merritt (2017) quotes Stuart Russell as saying that modern neural nets "lack the expressive power of programming languages and declarative semantics that make database systems, logic programming, and knowledge systems useful." Russell believes "We have at least half a dozen major breakthroughs to come before we get [to AI]".

Replies to Yudkowsky on "local capability gain"

Yudkowsky (2016a) discusses some interesting insights from AlphaGo's matches against Lee Sedol and from DeepMind's work more generally. He says:

AlphaGo’s core is built around a similar machine learning technology to DeepMind’s Atari-playing system – the single, untweaked program that was able to learn superhuman play on dozens of different Atari games just by looking at the pixels, without specialization for each particular game. In the Atari case, we didn’t see a bunch of different companies producing gameplayers for all the different varieties of game. The Atari case was an example of an event that Robin Hanson called “architecture” and doubted, and that I called “insight.” Because of their big architectural insight, DeepMind didn’t need to bring in lots of different human experts at all the different Atari games to train their universal Atari player. DeepMind just tossed all pre-existing expertise because it wasn’t formatted in a way their insightful AI system could absorb, and besides, it was a lot easier to just recreate all the expertise from scratch using their universal Atari-learning architecture.

I agree with Yudkowsky that there are domains where a new general tool renders previous specialized tools obsolete all at once. However:

- There wasn't intense pressure to perform well on most Atari games before DeepMind tried. Specialized programs can indeed perform well on such games if one cares to develop them. For example, DeepMind's 2015 Atari player actually performed below human level on Ms. Pac-Man (Mnih et al. 2015, Figure 3), but in 2017, Microsoft AI researchers beat Ms. Pac-Man by optimizing harder for just that one game.

- While DeepMind's Atari player is certainly more general in its intelligence than most other AI game-playing programs, its abilities are still quite limited. For example, DeepMind's player scored 0% on Montezuma's Revenge (Mnih et al. 2015, Figure 3). This was later improved upon by adding "curiosity" to encourage exploration. But that's an example that fits the view that AI progress generally proceeds by small tweaks.

Yudkowsky (2016a) continues:

so far as I know, AlphaGo wasn’t built in collaboration with any of the commercial companies that built their own Go-playing programs for sale. The October architecture was simple and, so far as I know, incorporated very little in the way of all the particular tweaks that had built up the power of the best open-source Go programs of the time. Judging by the October architecture, after their big architectural insight, DeepMind mostly started over in the details (though they did reuse the widely known core insight of Monte Carlo Tree Search). DeepMind didn’t need to trade with any other Go companies or be part of an economy that traded polished cognitive modules, because DeepMind’s big insight let them leapfrog over all the detail work of their competitors.

This is a good point, but I think it's mainly a function of the limited complexity of the Go problem. With the exception of learning from human play, AlphaGo didn't require massive inputs of messy, real-world data to succeed, because its world was so simple. Go is the kind of problem where we would expect a single system to be able to perform well without trading for cognitive assistance. Real-world problems are more likely to depend upon external AI systems, e.g., when doing a web search for information. No simple AI system that runs on just a few machines will reproduce the massive data or extensively fine-tuned algorithms of Google search. For the foreseeable future, Google search will remain an external "polished cognitive module" that needs to be "traded for" (although Google search is free for limited numbers of queries). The same is true for many other cloud services, especially those reliant upon huge amounts of data or specialized domain knowledge. We see lots of specialization and trading of non-AI cognitive modules, such as hardware components, software applications, Amazon Web Services, etc. And of course, simple AIs will for a long time depend upon the human economy to provide material goods and services, including electricity, cooling, buildings, security guards, national defense, etc.

A case for epistemic modesty on AI timelines

Estimating how long a software project will take to complete is notoriously difficult. Even if I've completed many similar coding tasks before, when I'm asked to estimate the time to complete a new coding project, my estimate is often wrong by a factor of 2 and sometimes wrong by a factor of 4, or even 10. Insofar as the development of AGI (or other big technologies, like nuclear fusion) is a big software (or more generally, engineering) project, it's unsurprising that we'd see similarly dramatic failures of estimation on timelines for these bigger-scale achievements.

A corollary is that we should maintain some modesty about AGI timelines and takeoff speeds. If, say, 100 years is your median estimate for the time until some agreed-upon form of AGI, then there's a reasonable chance you'll be off by a factor of 2 (suggesting AGI within 50 to 200 years), and you might even be off by a factor of 4 (suggesting AGI within 25 to 400 years). Similar modesty applies for estimates of takeoff speed from human-level AGI to superhuman AGI, although I think we can largely rule out extreme takeoff speeds (like achieving performance far beyond human abilities within hours or days) based on fundamental reasoning about the computational complexity of what's required to achieve superintelligence.
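
The ranges quoted above follow from dividing and multiplying the median by the error factor. A minimal sketch, in which the function name `timeline_range` is hypothetical and the 100-year median is just the example figure from the text rather than a forecast:

```python
def timeline_range(median_years: float, error_factor: float) -> tuple[float, float]:
    """Return (low, high) when the estimate may be off by error_factor either way."""
    return median_years / error_factor, median_years * error_factor

MEDIAN_YEARS = 100  # example median from the text, not a prediction

for factor in (2, 4, 10):
    low, high = timeline_range(MEDIAN_YEARS, factor)
    print(f"off by a factor of {factor}: {low:g} to {high:g} years")
```

The factor of 10 is included because that is the worst-case software-estimation error mentioned earlier; it stretches the same median to a range of 10 to 1,000 years.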

My bias is generally to assume that a given technology will take longer to develop than what you hear about in the media, (a) because of the planning fallacy and (b) because those who make more audacious claims are more interesting to report about. Believers in "the singularity" are not necessarily wrong about what's technically possible in the long term (though sometimes they are), but the reason enthusiastic singularitarians are considered "crazy" by more mainstream observers is that singularitarians expect change much faster than is realistic. AI turned out to be much harder than the Dartmouth Conference participants expected. Likewise, nanotech is progressing more slowly and more incrementally than the starry-eyed proponents predicted.

Intelligent robots in your backyard

Many nature-lovers are charmed by the behavior of animals but find computers and robots to be cold and mechanical. Conversely, some computer enthusiasts may find biology to be soft and boring compared with digital creations. However, the two domains share a surprising amount of overlap. Ideas of optimal control, locomotion kinematics, visual processing, system regulation, foraging behavior, planning, reinforcement learning, etc. have been fruitfully shared between biology and robotics. Neuroscientists sometimes look to the latest developments in AI to guide their theoretical models, and AI researchers are often inspired by neuroscience, such as with neural networks and in deciding what cognitive functionality to implement.

I think it's helpful to see animals as being intelligent robots. Organic life has a wide diversity, from unicellular organisms through humans and potentially beyond, and so too can robotic life. The rigid conceptual boundary that many people maintain between "life" and "machines" is not warranted by the underlying science of how the two types of systems work. Different types of intelligence may sometimes converge on the same basic kinds of cognitive operations, and especially from a functional perspective (when we look at what the systems can do rather than how they do it), it seems to me intuitive that human-level robots would deserve human-level treatment, even if their underlying algorithms were quite dissimilar.

Whether robot algorithms will in fact be dissimilar from those in human brains depends on how much biological inspiration the designers employ and how convergent human-type mind design is for being able to perform robotic tasks in a computationally efficient manner. Some classical robotics algorithms rely mostly on mathematical problem definition and optimization; other modern robotics approaches use biologically plausible reinforcement learning and/or evolutionary selection. (In one YouTube video about robotics, I saw that someone had written a comment to the effect that "This shows that life needs an intelligent designer to be created." The irony is that some of the best robotics techniques use evolutionary algorithms. Of course, there are theists who say God used evolution but intervened at a few points, and that would be an apt description of evolutionary robotics.)

The distinction between AI and AGI is somewhat misleading, because it may incline one to believe that general intelligence is somehow qualitatively different from simpler AI. In fact, there's no sharp distinction; there are just different machines whose abilities have different degrees of generality. A critic of this claim might reply that bacteria would never have invented calculus. My response is as follows. Most people couldn't have invented calculus from scratch either, but over a long enough period of time, eventually the collection of humans produced enough cultural knowledge to make the development possible. Likewise, if you put bacteria on a planet long enough, they too may develop calculus, by first evolving into more intelligent animals who can then go on to do mathematics. The difference here is a matter of degree: Simpler machines like bacteria take vastly longer to accomplish a given complex task.

Just as Earth's history saw a plethora of animal designs before the advent of humans, so I expect a wide assortment of animal-like (and plant-like) robots to emerge in the coming decades well before human-level AI. Indeed, we've already had basic robots for many decades (or arguably even millennia). These will grow gradually more sophisticated, and as we converge on robots with the intelligence of birds and mammals, AI and robotics will become dinner-table conversation topics. Of course, I don't expect the robots to have the same sets of skills as existing animals. Deep Blue had chess-playing abilities beyond any animal, while in other domains it was less efficacious than a blade of grass. Robots can mix and match cognitive and motor abilities without strict regard for the order in which evolution created them.