Birds, Brains, Planes, and AI: Against Appeals to the Complexity / Mysteriousness / Efficiency of the Brain

[Epistemic status: Strong opinions lightly held, this time with a cool graph.]

I argue that an entire class of common arguments against short timelines is bogus, and provide weak evidence that anchoring to the human-brain-human-lifetime milestone is reasonable.

In a sentence, my argument is that the complexity and mysteriousness and efficiency of the human brain (compared to artificial neural nets) is almost zero evidence that building TAI will be difficult, because evolution typically makes things complex and mysterious and efficient, even when there are simple, easily understood, inefficient designs that work almost as well (or even better!) for human purposes.

In slogan form: If all we had to do to get TAI was make a simple neural net 10x the size of my brain, my brain would still look the way it does.

The case of birds & planes illustrates this point nicely. Moreover, it is also a precedent for several other short-timelines talking points, such as the human-brain-human-lifetime (HBHL) anchor.

Plan:

- Illustrative Analogy

- Exciting Graph

- Analysis

  - Extra brute force can make the problem a lot easier

  - Evolution produces complex mysterious efficient designs by default, even when simple inefficient designs work just fine for human purposes.

  - What’s bogus and what’s not

  - Example: Data-efficiency

- Conclusion

- Appendix

1909 French military plane, the Antoinette VII.

By Deep silence (Mikaël Restoux) - Own work (Bourget museum, in France), CC BY 2.5, https://commons.wikimedia.org/w/index.php?curid=1615429

Illustrative Analogy

| AI timelines, from our current perspective | Flying machine timelines, from the perspective of the late 1800’s |
| --- | --- |
| Shorty: Human brains are giant neural nets. This is reason to think we can make human-level AGI (or at least AI with strategically relevant skills, like politics and science) by making giant neural nets. | Shorty: Birds are winged creatures that paddle through the air. This is reason to think we can make winged machines that paddle through the air. |
| Longs: Whoa whoa, there are loads of important differences between brains and artificial neural nets: [what follows is a direct quote from the objection a friend raised when reading an early draft of this post!] | Longs: Whoa whoa, there are loads of important differences between birds and flying machines: |
| Shorty: The key variables seem to be size and training time. Current neural nets are tiny; the biggest one is only one-thousandth the size of the human brain. But they are rapidly getting bigger. Once we have enough compute to train neural nets as big as the human brain for as long as a human lifetime (HBHL), it should in principle be possible for us to build HLAGI. No doubt there will be lots of details to work out, of course. But that shouldn’t take more than a few years. | Shorty: Once the power-to-weight ratio of our motors surpasses the power-to-weight ratio of bird muscles, it should be in principle possible for us to build a flying machine. No doubt there will be lots of details to work out, of course. But that shouldn’t take more than a few years. |
| Longs: Bah! I don’t think we know what the key variables are. For example, biological brains seem to be able to learn faster, with less data, than artificial neural nets. And we don’t know why. Besides, “there will be lots of details to work out” is a huge understatement. It took evolution billions of generations of billions of individuals to produce humans. What makes you think we’ll be able to do it quickly? It’s plausible that actually we’ll have to do it the way evolution did it, i.e. meta-learn, i.e. evolve a large population of HBHLs, over many generations. (Or, similarly, train a neural net with a big batch size and a horizon length of a lifetime). And even if you think we’ll be able to do it substantially quicker than evolution did, it’s pretty presumptuous to think we could do it quickly enough that the HBHL milestone is relevant for forecasting. | Longs: Bah! I don’t think we know what the key variables are. For example, birds seem to be able to soar long distances without flapping their wings at all, and we still haven’t figured out how they do it. Another example: We still don’t know how birds manage to steer through the air without crashing (flight stability & control). Besides, “there will be lots of details to work out” is a huge understatement. It took evolution billions of generations of billions of individuals to produce birds. What makes you think we’ll be able to do it quickly? It’s plausible that actually we’ll have to do it the way evolution did it, i.e. meta-design, i.e. evolve a large population of flying machines, tweaking our blueprints each generation of crashed machines to grope towards better designs. And even if you think we’ll be able to do it substantially quicker than evolution did, it’s pretty presumptuous to think we could do it quickly enough that the date our engines achieve power/weight parity with bird muscle is relevant for forecasting. |

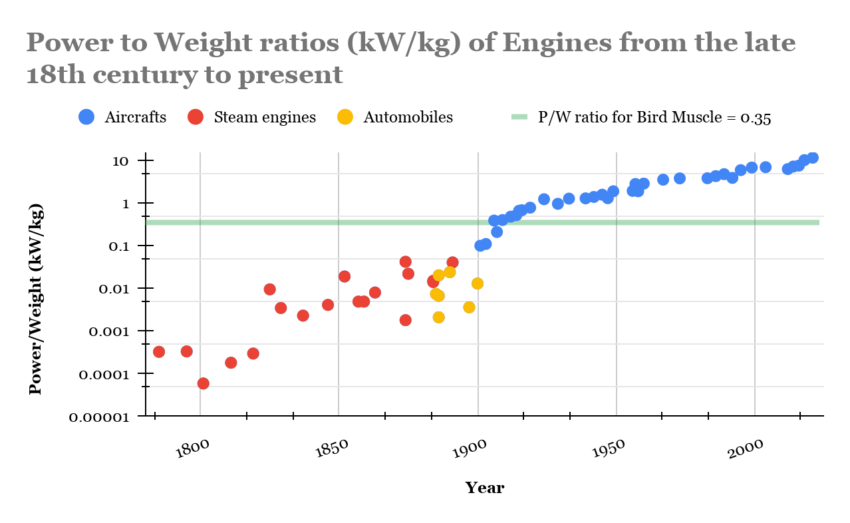

Exciting Graph

This data shows that Shorty was entirely correct about forecasting heavier-than-air flight. (For details about the data, see appendix.) Whether Shorty will also be correct about forecasting TAI remains to be seen.

In some sense, Shorty has already made two successful predictions: I started writing this argument before having any of this data; I just had an intuition that power-to-weight is the key variable for flight and that therefore we probably got flying machines shortly after our motors reached a power-to-weight comparable to that of bird muscle. Halfway through the first draft, I googled and confirmed that yes, the Wright Flyer’s motor was close to bird muscle in power-to-weight. Then, while writing the second draft, I hired an RA, Amogh Nanjajjar, to collect more data and build this graph. As expected, there was a trend of power-to-weight improving over time, with flight happening right around the time bird-muscle parity was reached.
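The check that the graph supports can be written down as a tiny procedure: find the earliest year in which any engine meets the biological benchmark, then compare that year with the date of the first successful machine. Here is a minimal sketch in Python. The function name `first_parity_year` and every number in it are hypothetical placeholders in arbitrary units; they are not the dataset behind the graph.

```python
from typing import Iterable, Optional, Tuple


def first_parity_year(
    engines: Iterable[Tuple[int, float]], benchmark: float
) -> Optional[int]:
    """Return the earliest year in which some engine's power-to-weight
    ratio meets or exceeds the benchmark, or None if none ever does."""
    years = [year for year, ratio in engines if ratio >= benchmark]
    return min(years) if years else None


# PLACEHOLDER values in arbitrary units, invented purely for illustration.
# They are NOT the engine data collected for the graph.
placeholder_engines = [
    (1800, 1.0),
    (1850, 3.0),
    (1880, 6.0),
    (1900, 11.0),
    (1910, 30.0),
]
placeholder_benchmark = 10.0  # stands in for the bird-muscle bar

parity_year = first_parity_year(placeholder_engines, placeholder_benchmark)
print(parity_year)
```

With real data, the interesting quantity is the gap between this parity year and the year of the first working flying machine.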

I had previously heard from a friend, who read a book about the invention of flight, that the Wright brothers were the first because they (a) studied birds and learned some insights from them, and (b) did a bunch of trial and error, rapid iteration, etc. (e.g. in wind tunnels). The story I heard was all about the importance of insight and experimentation--but this graph seems to show that the key constraint was engine power-to-weight. Insight and experimentation were important for determining who invented flight, but not for determining which decade flight was invented in.

Analysis

Part 1: Extra brute force can make the problem a lot easier

One way in which compute can substitute for insights/algorithms/architectures/ideas is that you can use compute to search for them. But there is a different and arguably more important way in which compute can substitute for insights/etc.: Scaling up the key variables, so that the problem becomes easier, so that fewer insights/etc. are needed.

For example, with flight, the problem becomes easier the higher the power/weight ratio of your motors. Even if the Wright brothers didn’t exist and nobody else had their insights, eventually we would have achieved powered flight anyway, because when our engines are 100x more powerful for the same weight, we can use extremely simple, inefficient designs. (For example, imagine a u-shaped craft with a low center of gravity and helicopter-style rotors on each tip. Add a third, smaller propeller on a turret somewhere for steering.)

With neural nets, we have plenty of evidence now that bigger = better, with theory to back it up. Suppose the problem of making human-level AGI with HBHL levels of compute is really difficult. OK, 10x the parameter count and 10x the training time and try again. Still too hard? Repeat.
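To get a feel for how quickly "10x both and try again" escalates, here is a rough sketch in Python. It rests on an assumption that is not made in this post: that training compute is proportional to parameter count times training time. Under that assumption each retry costs 100x the compute of the previous attempt. The function name `retry_schedule` and the unit-free starting values are hypothetical.

```python
from typing import List, Tuple


def retry_schedule(
    base_params: float, base_train_time: float, retries: int, factor: float = 10.0
) -> List[Tuple[float, float, float]]:
    """List (params, train_time, relative_compute) for each attempt.

    ASSUMPTION (not from the post): compute scales as params * train_time,
    so scaling both by `factor` multiplies compute by factor ** 2.
    """
    schedule = []
    for attempt in range(retries + 1):
        scale = factor ** attempt
        relative_compute = scale * scale  # params and time both grow by `scale`
        schedule.append((base_params * scale, base_train_time * scale, relative_compute))
    return schedule


# Units are relative to the HBHL starting point (1.0 = HBHL level).
for params, train_time, compute in retry_schedule(1.0, 1.0, retries=3):
    print(f"params x{params:g}, training time x{train_time:g}, compute x{compute:g}")
```

If the proportionality assumption is roughly right, three retries already mean six orders of magnitude more compute than the HBHL starting point, which is why the number of retries needed matters so much for timelines.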

Note that I’m not saying that if you take a particular design that doesn’t work, and make it bigger, it’ll start working. (If you took Da Vinci’s flying machine and made the engine 100x more powerful, it would not work). Rather, I’m saying that the problem of finding a design that works gets qualitatively easier the more parameters and training time you have to work with.

Finally, remember that human-level AGI is not the only kind of TAI. Sufficiently powerful R&D tools would work, as would sufficiently powerful persuasion tools, as might something that is agenty and inferior to humans in some ways but vastly superior in others.

Part 2: Evolution produces complex mysterious efficient designs by default, even when simple inefficient designs work just fine for human purposes.

Suppose that actually all we have to do to get TAI is something fairly simple and obvious, but with a neural net 10x the size of my (actual) brain and trained for 10x longer. In this world, does the human brain look any different than it does in the actual world?

No. Here is a nonexhaustive list of reasons why evolution would evolve human brains to look like they do, with all their complexity and mysteriousness and efficiency, even if the same capability levels could be reached with 10x more neurons and a very simple architecture. Feel free to skip ahead if you think this is obvious.

- In general, evolved creatures are complex and mysterious to us, even when simple and human-comprehensible architectures work fine. Take birds, for example: As mentioned before, all the way up to the Wright brothers there were a lot of very basic things about birds that were still not understood. From this article: “They watched buzzards glide from horizon to horizon without moving their wings, and guessed they must be sucking some mysterious essence of upness from the air. Few seemed to realize that air moves up and down as well as horizontally.” I don’t know much about ornithology but I’d be willing to bet that there were lots of important things discovered about birds after airplanes already existed, and that there are still at least a few remaining mysteries about how birds fly. (Spot check: Yep, the history of ornithopters page says “...the development of comprehensive aerodynamic theory for flapping remains an outstanding problem...”). And of course evolved creatures are often more efficient in various ways than their still-useful engineered counterparts.

- Making the brain 10x bigger would be enormously costly to fitness, because it would cost 10x more energy and restrict mobility (not to mention the difficulties of getting through the birth canal!) Much better to come up with clever modules, instincts, optimizations, etc. that achieve the same capabilities in a smaller brain.

- Evolution is heavily constrained on training data, perhaps even more than on brain size. It can’t just evolve the organism to have 10x more training data, because longer-lived organisms have more opportunities to be eaten or suffer accidents, especially in their 10x-longer childhoods. Far better to hard-code some behaviors as instincts.

- Evolution gets clever optimizations and modules and such “for free” in some sense. Since it is evolving millions of individuals for millions of generations anyway, it’s not a big deal for it to perform massive search and gradient descent through architecture-space.

- Completely blank slate brains (i.e. extremely simple architecture, no instincts or finely tuned priors) would be unfit even if they were highly capable because they wouldn’t be aligned to evolution’s values (i.e. reproduction.) Perhaps most of the complexity in the human brain--the instincts, inbuilt priors, and even most of the modules--isn’t for capabilities at all, but rather for alignment.

Part 3: What’s bogus and what’s not

The general pattern of argument I think is bogus is:

The brain has property X, which seems to be important to how it functions. We don’t know how to make AIs with property X. It took evolution a long time to make brains have property X. This is reason to think TAI is not near.

As argued above, if TAI is near, there should still be many X which are important to how the brain functions, which we don’t know how to reproduce in AI, and which it took evolution a long time to produce. So rattling off a bunch of X’s is basically zero evidence against TAI being near.

Put differently, here are two objections any particular argument of this type needs to overcome:

- TAI does not actually require X (analogous to how airplanes didn’t require anywhere near the energy-efficiency of birds, nor the ability to soar, nor the ability to flap their wings, nor the ability to take off from unimproved surfaces… the list goes on)

- We’ll figure out how to get property X in AIs soon after we have the other key properties (size and training time), because (a) we can do search, like evolution did but much more efficiently, (b) we can increase the other key variables to make our design/search problem easier, and (c) we can use human ingenuity & biological inspiration. Historically there is plenty of precedent for the previous three factors being strong enough; see e.g. the case of powered flight.

This reveals how the arguments could be reformulated to become non-bogus! They need to argue (a) that X is probably necessary for TAI, and (b) that X isn’t something that we’ll figure out fairly quickly once the key variables of size and training time are surpassed.

In some cases there are decent arguments to be made for both (a) and (b). I think data-efficiency is one of them, so I’ll use that as my example below.

Part 4: Example: Data-efficiency

Let’s work through the example of data-efficiency. A bad version of this argument would be:

Humans are much more data-efficient learners than current AI systems. Data-efficiency is very important; any human who learned as inefficiently as current AI would basically be mentally disabled. This is reason to think TAI is not near.

The rebuttal to this bad argument is:

If birds were as energy-inefficient as planes, they’d be disabled too, and would probably die quickly. Yet planes work fine. (See Table 1 from this AI Impacts page) Even if TAI is near, there are going to be lots of X’s that are important for the brain, that we don’t know how to make in AI yet, but that are either unnecessary for TAI or not too difficult to get once we have the other key variables. So even if TAI is near, I should expect to hear people going around pointing out various X’s and claiming that this is reason to think TAI is far away. You haven’t done anything to convince me that this isn’t what’s happening with X = data-efficiency.

However, I do think the argument can be reformulated and expanded to become good. Here’s a sketch, inspired by Ajeya Cotra’s argument here.

We probably can’t get TAI without figuring out how to make AIs that are as data-efficient as humans. It’s true that there are some useful tasks for which there is plenty of data--like call center work, or driving trucks--but AIs that can do these tasks won’t be transformative. Transformative AI will be doing things like managing corporations, leading armies, designing new chips, and writing AI theory publications. Insofar as AI learns more slowly than humans, by the time it accumulates enough experience doing one of these tasks, (a) the world would have changed enough that its skills would be obsolete, and/or (b) it would have made a lot of expensive mistakes in the meantime.

Moreover, we probably won’t figure out how to make AIs that are as data-efficient as humans for a long time--decades at least. This is because 1. We’ve been trying to figure this out for decades and haven’t succeeded, and 2. Having a few orders of magnitude more compute won’t help much. Now, to justify point #2: Neural nets actually do get more data-efficient as they get bigger, but we can plot the trend and see that they will still be less data-efficient than humans when they are a few orders of magnitude bigger. So making them bigger won’t be enough, we’ll need new architectures/algorithms/etc. As for using compute to search for architectures/etc., that might work, but given how long evolution took, we should think it’s unlikely that we could do this with only a few orders of magnitude of searching—probably we’d need to do many generations of large population size. (We could also think of this search process as analogous to typical deep learning training runs, in which case we should expect it’ll take many gradient updates with large batch size.) Anyhow, there’s no reason to think that data-efficient learning is something you need to be human-brain-sized to do. If we can’t make our tiny AIs learn efficiently after several decades of trying, we shouldn’t be able to make big AIs learn efficiently after just one more decade of trying.

I think this is a good argument. Do I buy it? Not yet. For one thing, I haven’t verified whether the claims it makes are true, I just made them up as plausible claims which would be persuasive to me if true. For another, some of the claims actually seem false to me. Finally, I suspect that in 1895 someone could have made a similarly plausible argument about energy efficiency, and another similarly plausible argument about flight control, and both arguments would have been wrong: Energy efficiency turned out to be insufficiently necessary, and flight control turned out to be insufficiently difficult!

Conclusion

What I am not saying: I am not saying that the case of birds and planes is strong evidence that TAI will happen once we hit the HBHL milestone. I do think it is evidence, but it is weak evidence. (For my all-things-considered view of how many orders of magnitude of compute it’ll take to get TAI, see future posts, or ask me.) I would like to see a more thorough investigation of cases in which humans attempt to design something that has an obvious biological analogue. It would be interesting to see if the case of flight was typical. Flight being typical would be strong evidence for short timelines, I think.

What I am saying: I am saying that many common anti-short-timelines arguments are bogus. They need to do much more than just appeal to the complexity/mysteriousness/efficiency of the brain; they need to argue that some property X is both necessary for TAI and not about to be figured out for AI anytime soon, not even after the HBHL milestone is passed by several orders of magnitude.

Why this matters: In my opinion the biggest source of uncertainty about AI timelines has to do with how much “special sauce” is necessary for making transformative AI. As jylin04 puts it,

A first and frequently debated crux is whether we can get to TAI from end-to-end training of models specified by relatively few bits of information at initialization, such as neural networks initialized with random weights. OpenAI in particular seems to take the affirmative view[^3], while people in academia, especially those with more of a neuroscience / cognitive science background, seem to think instead that we'll have to hard-code in lots of inductive biases from neuroscience to get to AGI [^4].

In my words: Evolution clearly put lots of special sauce into humans, and took millions of generations of millions of individuals to do so. How much special sauce will we need to get TAI?

Shorty is one end of a spectrum of disagreement on this question. Shorty thinks the amount of special sauce required is small enough that we’ll “work out the details” within a few years of having the key variables (size and training time). At the other end of the spectrum would be someone who thought that the amount of special sauce required is similar to the amount found in the brain. Longs is in the middle. Longs thinks the amount of special sauce required is large enough that the HBHL milestone isn’t particularly relevant to timelines; we’ll either have to brute-force search for the special sauce like evolution did, or have some brilliant new insights, or mimic the brain, etc.

This post rebutted common arguments against Shorty’s position. It also presented weak evidence in favor of Shorty’s position: the precedent of birds and planes. In future posts I’ll say more about what I think the probability distribution over amount-of-special-sauce-needed should be and why.

Acknowledgements: Thanks to my RA, Amogh Nanjajjar, for compiling the data and building the graph. Thanks to Kaj Sotala, Max Daniel, Lukas Gloor, and Carl Shulman for comments on drafts.

Appendix

Some footnotes:

- I didn’t say anything about why we might think size and training time are the key variables, or even what “key variables” means. Hopefully I’ll get a chance in the comments or in subsequent posts.

- I deliberately left vague what “training time” means and what “size” means. Thus, I’m not committing myself to any particular way of calculating the HBHL milestone yet. I’m open to being convinced that the HBHL milestone is farther in the future than it might seem.

- Persuasion tools, even very powerful ones, wouldn’t be TAI by the standard definition. However they would constitute a potential-AI-induced-point-of-no-return, so they still count for timelines purposes.

- This 'How much special sauce is needed?' variable is very similar to Ajeya Cotra's variable 'how much compute would lead to TAI given 2020's algorithms.'

Some bookkeeping details about the data:

- This dataset is not complete. Amogh did a reasonably thorough search for engines throughout the period (with a focus on stuff before 1910) but was unable to find power or weight stats for many of the engines we heard about. Nevertheless I am reasonably confident that this dataset is representative; if an engine was significantly better than the others of its time, probably this would have been mentioned and Amogh would have flagged it as a potential outlier.

- Many of the points for steam engine power/weight should really be bumped up slightly. This is because most of the data we had was for the weight of the entire locomotive of a steam-powered train, rather than just the steam engine part. I don’t know what fraction of a locomotive is non-steam-engine but 50% seems like a reasonable guess. I don’t think this changes the overall picture much; in particular, the two highest red dots do not need to be bumped up at all (I checked).

- The birds bar is the power/weight ratio for the muscles of a particular species of bird, as reported by this source. Amogh has done a bit of searching and doesn’t think muscle power/weight is significantly different for other species of bird. Seems plausible to me; even if the average bird has muscles that are twice (or half) as powerful-per-kilogram, the overall graph would look basically the same.

- I attempted to find estimates of human muscle power-to-weight ratio; it gets smaller the more tired the muscles get, but at peak performance for fit individuals it seems to be about an order of magnitude less than bird muscle. (This chart lists power-to-weight ratio for human cyclists, which according to this are probably about half muscle, so look at the left-hand column and double it.) Interestingly, this means that the engines of the first flying machines were possibly the first engines to be substantially better than human flapping/pedaling as a source of flying-machine power.
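Two of the adjustments above (the steam locomotives and the human cyclists) are the same piece of arithmetic: when power is divided by the weight of a whole system but only a fraction of that weight is the engine or muscle, divide the measured ratio by that fraction. A minimal sketch in Python, where the function name `component_power_to_weight` is hypothetical and the 0.5 fractions are the rough guesses stated above, not measured values:

```python
def component_power_to_weight(measured_ratio: float, mass_fraction: float) -> float:
    """Convert a whole-system power/weight ratio into a ratio for the
    power-producing component alone.

    measured_ratio: power divided by the weight of the whole system
                    (entire locomotive, or entire cyclist).
    mass_fraction:  share of that weight that is engine or muscle (0 < f <= 1).
    """
    if not 0.0 < mass_fraction <= 1.0:
        raise ValueError("mass_fraction must be in (0, 1]")
    return measured_ratio / mass_fraction


# With the rough 50% guess, the corrected ratio is twice the measured one.
# The input 1.0 is a unit-free placeholder, not a data point from the graph.
print(component_power_to_weight(1.0, 0.5))
```

Under the 50% guess, the affected steam-engine points and the cyclist figures each move up by a factor of two.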